AllTrails Says "Moderate." Cascades Terrain Doesn't Care.

It was a "Moderate" trail. 4.2 stars. 847 reviews. My daughter was 10. The dog was a lab mix who'd done plenty of miles. We were fine.

Until we weren't.

The scramble came out of nowhere. Or rather, it came out of exactly where it had always been, sitting right there in the terrain, buried under every review from someone who hiked from the parking lot to the first viewpoint, called the trail "fun!", and turned around. Class 3. Hands on rock. Exposure on the left. My daughter looking at me with exactly the expression you never want to see when you're the one who planned the trip.

That day rewired how I think about trail databases. Ten years moving freight across the Pacific Northwest trained me to trust documentation that's been stress-tested — bills of lading, load specifications, route confirmations. A rating system that doesn't define its own terms is not documentation. It's noise with a star rating attached.

Here's what I've learned since, and what I now run through before any trail commitment.

What "Moderate" Actually Measures (And What It Doesn't)

AllTrails assigns difficulty using a combination of distance, total elevation gain, and route type — then layers crowd-sourced user ratings on top. Their published methodology does include some terrain-type flags, and experienced contributors sometimes tag routes as "scramble" or note exposed sections in reviews. That's better than nothing.

But here's the problem I keep running into on Cascades terrain: even when technical terrain is tagged or mentioned, it doesn't change the official difficulty label. A trail with a Class 3 scramble section flagged in the reviews can still display "Moderate" at the top of the listing. The structural difficulty rating and the terrain reality don't talk to each other in a way that surfaces at the point of decision.

What the difficulty label still does not reliably capture:

- Route-finding complexity

- Exposure: a sustained drop on one or both sides of the trail

- Scrambling rated Class 2 or Class 3 on the Yosemite Decimal System (YDS)

- Water crossing depth or frequency

- Trail surface quality: hardpacked vs. scree vs. root tangle

- Seasonal condition deltas

The WTA (Washington Trails Association) uses a different schema — a boot-rating system based on mileage, elevation, and trail condition as assessed by staff and trip reporters. Better calibrated for the Cascades specifically, but still not standardized against YDS terrain classification.

USFS and NPS maintain their own rating language, which typically defaults to Easy / Moderate / Strenuous. In my experience, agency ratings for backcountry trails often lag behind changing conditions — washed-out bridges, reroutes, degraded tread — because the resources to update them aren't always there.

The result: a trail with a Class 3 scramble section can be rated "Moderate" on all three platforms simultaneously. Not because anyone is lying. Because none of them define "Moderate" in a way that formally excludes it, and none of them share a common terrain classification standard.

The Yosemite Decimal System Gap

The YDS is the closest thing American hiking has to a universal terrain language. Class 1 is hiking. Class 2 is off-trail rough travel, possibly some use of hands. Class 3 is hands-on-rock, real exposure, fall risk. Class 4 is technical enough that most recreational hikers should be roped. Class 5 is climbing.

The gap in trail databases: YDS is only consistently applied to technical climbing routes. Everything below Class 5 gets folded into generic trail ratings with no terrain classification. A Class 3 scramble — where a fall could injure or kill you — is footnoted, buried in comments, or simply omitted from the official difficulty label.

This is exactly how families end up above treeline in trail runners. Not because they're reckless. Because the database told them "Moderate" and they trusted it — because there was no reason not to.

The fix isn't to abandon the databases. It's to treat them as a starting point, not a verdict.

The Vertical Gain-Per-Mile Test

My logistics background gave me this reflex: when you don't trust a label, calculate the underlying number yourself.

Total elevation gain divided by total mileage gives you gain per mile. My working breakdown:

| Gain per Mile | What It Actually Means |
|---|---|
| Under 100 ft | Flat. Genuinely flat. Accessible to most people in most conditions. |
| 100–200 ft | Rolling terrain. Some sustained effort on uphills. |
| 200–300 ft | Moderate sustained climb. You'll feel it. |
| 300–400 ft | Strenuous by any honest measure. |
| Over 400 ft | Plan around it, not through it. |

Most trail databases give you total gain and total mileage. Do the division. Don't accept the label.
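If you'd rather not do the division by hand, here's a minimal Python sketch of it. The function names are my own, not from any app. It assumes the bands run under 100, 100–200, 200–300, 300–400, and over 400 ft per mile, with 300–400 counting as Strenuous.

```python
def gain_per_mile(total_gain_ft: float, total_miles: float) -> float:
    """Total elevation gain divided by total mileage, in feet per mile."""
    if total_miles <= 0:
        raise ValueError("total_miles must be positive")
    return total_gain_ft / total_miles


def classify_gain(ft_per_mile: float) -> str:
    """Map feet per mile onto the gain-per-mile bands.

    Assumption: 300-400 ft/mi counts as Strenuous, and anything
    above 400 ft/mi is "plan around it".
    """
    if ft_per_mile < 100:
        return "Flat"
    if ft_per_mile < 200:
        return "Rolling"
    if ft_per_mile < 300:
        return "Moderate sustained climb"
    if ft_per_mile <= 400:
        return "Strenuous"
    return "Plan around it"


if __name__ == "__main__":
    # Hypothetical listing: 5.6 miles total, 1,400 ft total gain.
    rate = gain_per_mile(1400, 5.6)
    print(f"{rate:.0f} ft/mi: {classify_gain(rate)}")  # 250 ft/mi: Moderate sustained climb
```

Two numbers off the listing, one division, one lookup. That's the whole check.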

The other number worth pulling: elevation at the summit or high point. A trail that gains 1,400 feet but tops out at 4,200 feet is a different problem than one topping out at 7,800 feet — both for weather exposure and for the terrain type you're likely crossing to get there.

The Cross-Referencing Protocol

This is the sequence I run for any trail I haven't done in the current season:

Step 1: AllTrails for baseline geometry.

Total distance, total gain, starting elevation. Check the photos tab: crowd-sourced photos will show you more terrain truth than the official description. Read the most recent reviews sorted by date, not helpfulness. Look for: "use trail," "cairns," "exposed," "snow," "ice," "crossing." Any one of those words means you read Step 2 with extra scrutiny.
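If you paste reviews into a list yourself, the keyword scan is easy to sketch in Python. This assumes a hypothetical list of (date, text) pairs you've copied by hand. It does not call any AllTrails API. It matches whole words plus an optional trailing "s", so "ice" won't fire on "nice" or "price."

```python
import re
from datetime import date

# Escalation words from Step 1.
ESCALATION_TERMS = ("use trail", "cairns", "exposed", "snow", "ice", "crossing")


def term_pattern(term):
    # Whole-word match with an optional trailing "s", so "crossing" also
    # catches "crossings" but "ice" does not fire on "nice" or "price".
    return re.compile(r"\b" + re.escape(term) + r"s?\b", re.IGNORECASE)


PATTERNS = {term: term_pattern(term) for term in ESCALATION_TERMS}


def flag_reviews(reviews):
    """Return (date, matched terms) for reviews that hit any term, newest first.

    `reviews` is a list of (date, text) pairs copied out by hand.
    """
    flagged = []
    for review_date, text in sorted(reviews, key=lambda r: r[0], reverse=True):
        hits = [term for term, pattern in PATTERNS.items() if pattern.search(text)]
        if hits:
            flagged.append((review_date, hits))
    return flagged


if __name__ == "__main__":
    # Hypothetical reviews for illustration.
    reviews = [
        (date(2025, 3, 2), "Nice views, easy walk."),
        (date(2025, 3, 9), "Snow above the lake and two crossings running high."),
    ]
    print(flag_reviews(reviews))
    # [(datetime.date(2025, 3, 9), ['snow', 'crossing'])]
```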

Step 2: WTA trip reports for terrain language.

Go to wta.org. Search the trail. Sort by most recent. WTA trip reporters write in plain English and they were actually there. They will tell you "the last half mile to the summit is a loose scramble with significant exposure" in a way the AllTrails description never will. No reports from the last 14 days? Treat the trail as unknown for current conditions.
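Here is the 14-day rule as a Python sketch. The report dates are ones you note down from the WTA trip report list yourself. `has_recent_report` is a hypothetical helper, not part of any WTA tool.

```python
from datetime import date, timedelta

RECENCY_WINDOW_DAYS = 14  # Step 2's cutoff


def has_recent_report(report_dates, today, window_days=RECENCY_WINDOW_DAYS):
    """True if any trip report date falls within the last `window_days` days, inclusive."""
    cutoff = today - timedelta(days=window_days)
    return any(cutoff <= d <= today for d in report_dates)


if __name__ == "__main__":
    # Hypothetical report dates noted from a trail's WTA page.
    today = date(2025, 3, 20)
    print(has_recent_report([date(2025, 2, 28), date(2025, 3, 10)], today))  # True
    print(has_recent_report([date(2025, 2, 28)], today))  # False
```

A `False` here is the "treat the trail as unknown" signal.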

Step 3: Agency page for access and closures.

USFS district page, NPS page, the managing agency's current conditions update. This is where you find out the road to the trailhead is still gated, the bridge washed out in February, the permit window changed, or there's a fire closure you didn't know about. AllTrails is notably slow to update access information relative to conditions on the ground. Go to the source.

Step 4: CalTopo or Gaia GPS for the topo profile.

Pull the trail on a topographic map. Look at the contour lines. A trail AllTrails describes as "rolling" that crosses tightly packed contour lines is not rolling; it's a sustained grind. Look for ridge traversals (exposed by definition), creek crossing indicators (blue lines that cross your route), and north-facing aspects (slower to clear snow in spring). Five minutes. The single most honest picture of what the terrain will actually demand.
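Contour spacing converts directly to grade. Multiply the contour interval by the number of intervals the trail crosses, then divide by the horizontal distance along the trail. Here's a minimal Python sketch. It assumes you read the contour interval off whatever map layer you're using, because the interval varies by map. It also assumes you measure the horizontal distance along the trail line with the map's measuring tool. The 40 ft interval in the example is a placeholder.

```python
import math


def grade_from_contours(contour_interval_ft, intervals_crossed, horizontal_ft):
    """Average grade (percent) and slope angle (degrees) along a stretch of trail.

    contour_interval_ft: vertical spacing between adjacent contour lines on the
        map layer you're using (check the layer; it varies by map).
    intervals_crossed: number of contour intervals between the start and end
        of the stretch.
    horizontal_ft: map distance along the trail for the same stretch.
    """
    if horizontal_ft <= 0:
        raise ValueError("horizontal_ft must be positive")
    rise_ft = contour_interval_ft * intervals_crossed
    grade_pct = 100 * rise_ft / horizontal_ft
    angle_deg = math.degrees(math.atan2(rise_ft, horizontal_ft))
    return grade_pct, angle_deg


if __name__ == "__main__":
    # Hypothetical: five 40 ft intervals crossed in 1,000 horizontal feet.
    grade, angle = grade_from_contours(40, 5, 1000)
    print(f"{grade:.0f}% grade, about {angle:.0f} degrees")  # 20% grade, about 11 degrees
    # The same grade held for a full mile, in gain-per-mile terms:
    print(f"{grade / 100 * 5280:.0f} ft of gain per mile")  # 1056 ft of gain per mile
```

Tighter lines mean a smaller horizontal distance for the same rise, which means a steeper grade. A 20 percent stretch sustained for a mile is roughly 1,056 ft of gain, far past the top band of the gain-per-mile table.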

These four steps cover terrain, conditions, and access: the full picture you need before committing to a spring hike.

Spring-Specific Rating Rot

The bulk of trail reviews on any popular Cascades route were written between June and September. That's when the photos were taken. That's when the trail was driest, the markers most visible, the crossings most manageable. The difficulty rating that emerges from aggregating that data reflects summer conditions — because that's the data that exists in volume.

In March, you are not in that data.
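One way to see how much of a trail's review pile is summer data is to count review dates by month. Here's a Python sketch of that. It assumes you've collected the review dates yourself. The sample dates below are made up for illustration.

```python
from collections import Counter
from datetime import date

SUMMER_MONTHS = (6, 7, 8, 9)  # June through September


def seasonal_share(review_dates, month):
    """Return (share of reviews from June-September, share from `month`)."""
    if not review_dates:
        return 0.0, 0.0
    by_month = Counter(d.month for d in review_dates)
    total = len(review_dates)
    summer = sum(by_month[m] for m in SUMMER_MONTHS)
    return summer / total, by_month[month] / total


if __name__ == "__main__":
    # Made-up review dates for illustration only.
    dates = [date(2024, m, d) for m, d in [(6, 1), (7, 4), (7, 20), (8, 3), (8, 15),
                                          (8, 30), (9, 2), (9, 21), (3, 10), (10, 5)]]
    print(seasonal_share(dates, 3))  # (0.8, 0.1)
```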

A "Moderate" trail in March may have:

- Snow-covered tread from the trailhead or mid-route, depending on elevation

- Ice on north-facing aspects regardless of air temperature

- Swollen creek crossings from snowmelt; where a USGS stream gauge sits on the same creek, its data will tell you the actual flow in cubic feet per second

- Zero visible trail markers, which converts a straightforward trail into a navigation exercise

- A hard turnaround time imposed by afternoon ice refreezing or incoming weather

The database didn't lie. It reflected the conditions most of its contributors experienced. Those just aren't the conditions you're hiking in. That gap is on you to close; the database won't do it automatically.

This is peak rating-mismatch season. People are pulling up AllTrails right now to plan Easter weekend hikes, trusting numbers built from a July aggregate. And they're doing it in exactly the window when the gap between what the database says and what the mountain delivers is at its widest, and spring's hazards make that gap most dangerous.

The Three Questions

Before any trail, I run three questions:

1. What's the gain per mile?

Calculate it. Don't read the label.

2. Are there WTA trip reports from the last 14 days?

If not, you're working from summer data. Proceed accordingly.

3. Does the topo show any ridge traversal or creek crossings?

If yes, in March: re-evaluate the whole day. Not the trail. The day.
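The three questions fit in a few lines of Python. This is a sketch, not a tool. `TripCheck` and `three_questions` are hypothetical names, and the inputs are numbers you gather in Steps 1 through 4. The March check mirrors the third question as written rather than any general spring rule.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TripCheck:
    total_gain_ft: float
    total_miles: float
    days_since_last_report: Optional[int]  # None means no WTA reports found at all
    topo_shows_ridge_or_creek: bool
    month: int  # 1 = January ... 12 = December


def three_questions(trip: TripCheck) -> List[str]:
    """Q1 always produces a note; Q2 and Q3 add a note only when they flag something."""
    notes = []
    # Q1: calculate gain per mile instead of reading the label.
    rate = trip.total_gain_ft / trip.total_miles
    notes.append(f"Q1: {rate:.0f} ft of gain per mile")
    # Q2: no WTA trip report in the last 14 days means summer data.
    if trip.days_since_last_report is None or trip.days_since_last_report > 14:
        notes.append("Q2: no report in the last 14 days; working from summer data")
    # Q3: ridge traversal or creek crossing in March means re-evaluating the day.
    if trip.topo_shows_ridge_or_creek and trip.month == 3:
        notes.append("Q3: ridge or creek on the topo in March; re-evaluate the whole day")
    return notes


if __name__ == "__main__":
    # Hypothetical trip: 1,400 ft over 5.6 miles, no recent reports, creek on the topo, March.
    for note in three_questions(TripCheck(1400, 5.6, None, True, 3)):
        print(note)
```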

That's the full methodology. Twenty minutes of prep for any trail you haven't done this season. Twenty minutes is not a lot to pay to know what you're actually walking into.

The databases are useful tools. They're just tools. They are not the mountain. The mountain has not read your AllTrails review.

Plan accordingly.

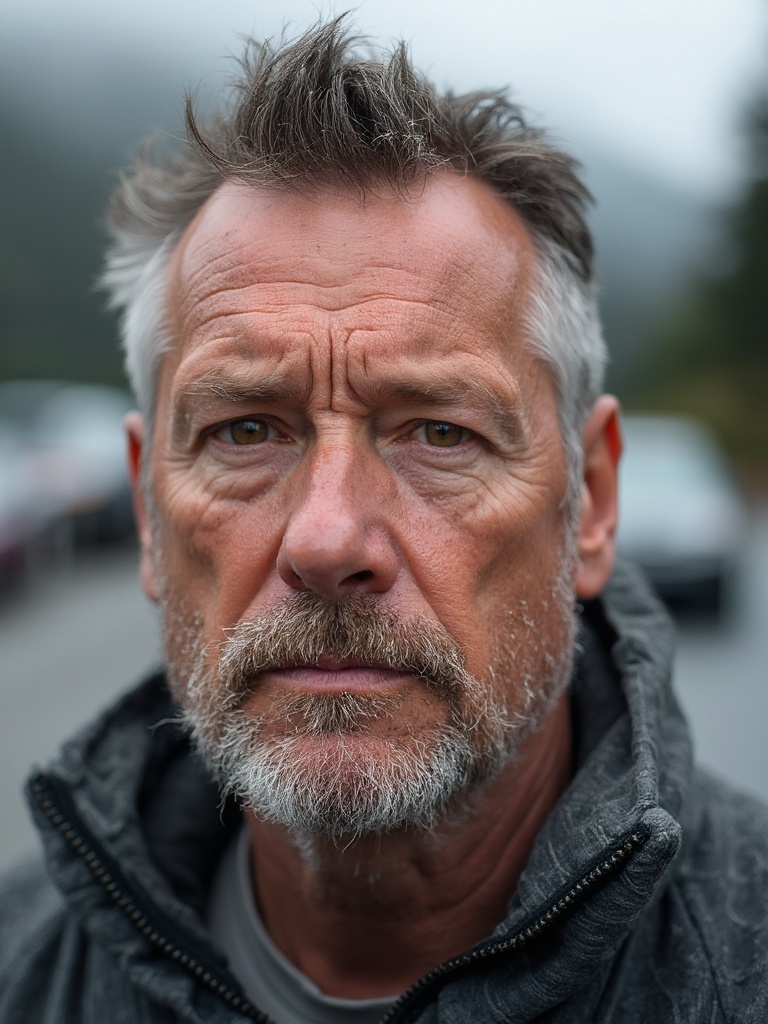

Garrett Vance writes about hiking the Cascades with the same obsessive documentation standards he applied to Pacific Northwest logistics management. He has not trusted a trail rating at face value since that scramble with his daughter and his dog. Neither should you.